Writing to CSV-file from multiple threads

I was writing a document and metadata exporter that reads data from SharePoint and writes it to multiple files. To improve its performance I went with multiple threads pumping out the data from SharePoint. One problem I faced was writing metadata to CSV files from multiple threads in parallel. This blog post shows how to do it using a concurrent queue.

This post uses the CsvHelper library to write objects to CSV files. I last covered this library in my blog post Generating CSV-files on .NET.

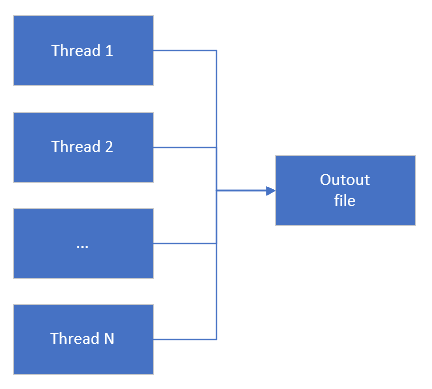

Problem: writing to file from multiple threads

I have multiple threads that traverse a document library on SharePoint and I need to generate some reports on the fly. I cannot collect the data in a buffer and flush it to files at the end of the run, as server memory is limited.

What I need is a thread-safe collection that worker threads can add their DTOs to and that another thread can read from.

I had some considerations:

- I don't want to bind the code too tightly to some logging framework that could do the job.

- I need a single point in the code where I can control reading and writing of data.

- Instead of primitive custom code with thread locking I prefer something provided by the framework.

With these ideas in mind I started building my solution.

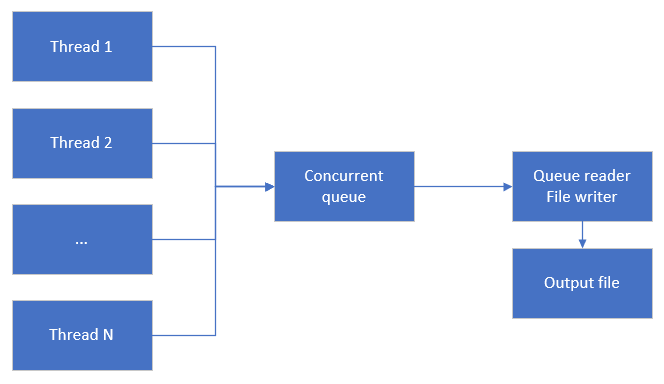

Using ConcurrentQueue<T>

I solved the problem using ConcurrentQueue<T>. Threads that gather data from the SharePoint document library add Data Transfer Objects (DTOs) to the concurrent queue. I don't have to worry about threading issues, as this is exactly what the concurrent queue was created for. I also added a thread that reads from the concurrent queue and writes the DTOs to a CSV file.

I wrote an example console application to illustrate my solution. It's easy to try out. Sorry for the messy code.

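```csharp
using System;
using System.Collections.Concurrent;
using System.Globalization;
using System.IO;
using System.Linq;
using System.Text;
using System.Threading;
using System.Threading.Tasks;
using CsvHelper;
using CsvHelper.Configuration;

class Product
{
    public int Id { get; set; }
    public string Name { get; set; }
    public double Price { get; set; }
}

class Program
{
    // Shared queue: worker threads enqueue, the writer thread dequeues.
    private static ConcurrentQueue<Product> _products = new ConcurrentQueue<Product>();

    static void Main(string[] args)
    {
        var source = new CancellationTokenSource();
        var token = source.Token;

        // Writer: the only thread that touches the file.
        var writerTask = Task.Run(() =>
        {
            // CsvHelper settings (class and property names depend on the CsvHelper version in use).
            var conf = new Configuration();
            conf.Encoding = Encoding.UTF8;
            conf.CultureInfo = CultureInfo.InvariantCulture;

            // File.Create truncates an existing file; File.OpenWrite would keep old trailing data.
            using (var stream = File.Create("products.txt"))
            using (var streamWriter = new StreamWriter(stream))
            using (var writer = new CsvWriter(streamWriter, conf))
            {
                writer.WriteHeader<Product>();
                writer.NextRecord();

                while (true)
                {
                    // Read the flag before draining, so items enqueued
                    // before cancellation are still written.
                    var stopRequested = token.IsCancellationRequested;

                    Product product = null;
                    while (_products.TryDequeue(out product))
                    {
                        writer.WriteRecord(product);
                        writer.NextRecord();
                    }

                    if (stopRequested)
                    {
                        streamWriter.Flush();
                        return;
                    }
                }
            }
        }, token);

        // First worker: products 1..10.
        var task1 = Task.Run(() =>
        {
            foreach (var number in Enumerable.Range(1, 10))
            {
                var product = new Product
                {
                    Id = number,
                    Name = "Product " + number,
                    // +1 avoids dividing by zero when the current second is 0.
                    Price = Math.Round((10d * number) / (DateTime.Now.Second + 1), 2)
                };

                _products.Enqueue(product);
                Task.Delay(150).Wait(); // simulate slow data gathering
            }
        });

        // Second worker: products 11..20.
        var task2 = Task.Run(() =>
        {
            foreach (var number in Enumerable.Range(11, 10))
            {
                var product = new Product
                {
                    Id = number,
                    Name = "Product " + number,
                    Price = Math.Round((10d * number) / (DateTime.Now.Second + 1), 2)
                };

                _products.Enqueue(product);
                Task.Delay(150).Wait();
            }
        });

        Task.WaitAll(task1, task2);

        Console.WriteLine(Environment.NewLine);
        Console.WriteLine("Press any key to exit ...");
        Console.ReadKey();

        source.Cancel();
        writerTask.Wait(); // let the writer flush and close the file before the process exits
    }
}
```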

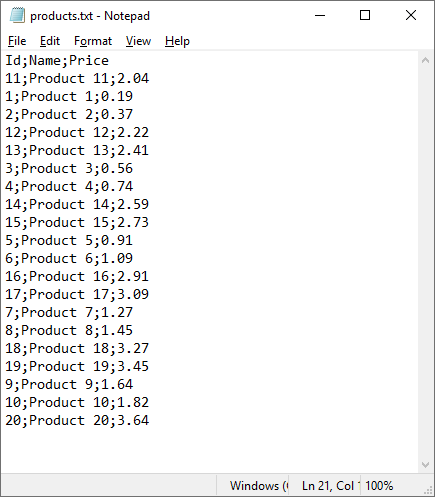

This code does its job well in my case. Here's the CSV file with product information.

Although I went with default settings, it's easy to configure CsvWriter to use the settings one needs.

What if SpinWait is too aggressive?

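Some of my dear readers may point out one thing: isn't the concurrent queue reading too aggressive? Well, there's no better answer than the usual "it depends". Internally ConcurrentQueue<T> uses SpinWait and this should be enough in my case. Still, when the queue runs empty the reader consumes 25% of CPU. TryDequeue does not wait for an item; it returns false immediately on an empty queue, so the outer while (true) loop keeps polling without a pause. This is okay when items are added to the queue frequently.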
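To make the polling cost visible, here is a minimal sketch that counts how many times TryDequeue can be called on an empty queue within a short time window. The class name EmptyQueuePolling and the method CountEmptyPolls are made up for this illustration and are not part of the exporter.

```csharp
using System;
using System.Collections.Concurrent;
using System.Diagnostics;

// Hypothetical helper, for illustration only.
static class EmptyQueuePolling
{
    // Counts how many TryDequeue calls fit into the given time window
    // while the queue stays empty.
    public static long CountEmptyPolls(TimeSpan window)
    {
        var queue = new ConcurrentQueue<int>();
        var watch = Stopwatch.StartNew();
        long polls = 0;

        while (watch.Elapsed < window)
        {
            // Returns false right away; it never waits for an item.
            if (!queue.TryDequeue(out _))
            {
                polls++;
            }
        }

        return polls;
    }

    public static void Main()
    {
        var polls = CountEmptyPolls(TimeSpan.FromMilliseconds(100));
        Console.WriteLine($"Empty polls in 100 ms: {polls}");
    }
}
```

The exact number depends on the machine, but it is typically very large, which is the work a bare polling loop does while there is nothing to write.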

If reading from the concurrent queue is too aggressive, it's possible to calm it down a little when the queue is empty. I added a 500 millisecond delay.

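```csharp
// Replaces the writer task in Main; uses the same token and _products queue.
var writerTask = Task.Run(async () =>
{
    var conf = new Configuration();
    conf.Encoding = Encoding.UTF8;
    conf.CultureInfo = CultureInfo.InvariantCulture;

    // File.Create truncates an existing file; File.OpenWrite would keep old trailing data.
    using (var stream = File.Create("products.txt"))
    using (var streamWriter = new StreamWriter(stream))
    using (var writer = new CsvWriter(streamWriter, conf))
    {
        writer.WriteHeader<Product>();
        writer.NextRecord();

        while (true)
        {
            // Read the flag before draining, so items enqueued
            // before cancellation are still written.
            var stopRequested = token.IsCancellationRequested;

            Product product = null;
            while (_products.TryDequeue(out product))
            {
                writer.WriteRecord(product);
                writer.NextRecord();
            }

            if (stopRequested)
            {
                streamWriter.Flush();
                return;
            }

            // No data, let's delay
            await Task.Delay(500);
        }
    }
}, token);
```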

In my case it calmed the concurrent queue reading down. Instead of 25% of CPU it stays near 3% when the concurrent queue is empty.

Wrapping up

Instead of inventing custom mechanisms to handle concurrent writes to a file from multiple threads, we can use existing classes and components. CsvHelper is a great library with excellent performance. The ConcurrentQueue<T> class helped us take control over file writing and tune CPU usage during data exports. In the end we have a simple and easy-to-extend solution that we can also use in other projects.

Have you considered using TPL DataFlow or System.Threading.Channels?

System.Threading.Channels is also a good option. Using concurrent collections results in code that is more familiar to the majority of developers.
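For readers curious what the Channels option could look like, here is a minimal sketch, not the exporter's actual code. It assumes a runtime where System.Threading.Channels is available. The ChannelSketch class, the RunAsync method and the plain "p,i" strings are invented for the illustration; a real exporter would hand Product objects to CsvHelper where the sketch adds to a list.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Threading.Channels;
using System.Threading.Tasks;

// Hypothetical sketch, for illustration only.
static class ChannelSketch
{
    // Starts the given number of producers, each writing itemsPerProducer lines,
    // and returns the lines received by the single consumer.
    public static async Task<List<string>> RunAsync(int producers, int itemsPerProducer)
    {
        var channel = Channel.CreateUnbounded<string>(
            new UnboundedChannelOptions { SingleReader = true });

        var consumer = Task.Run(async () =>
        {
            var lines = new List<string>();

            // WaitToReadAsync waits without polling and returns false
            // once the channel is completed and empty.
            while (await channel.Reader.WaitToReadAsync())
            {
                while (channel.Reader.TryRead(out var line))
                {
                    lines.Add(line); // a real exporter would write the CSV record here
                }
            }

            return lines;
        });

        var producerTasks = Enumerable.Range(0, producers).Select(p => Task.Run(() =>
        {
            for (var i = 0; i < itemsPerProducer; i++)
            {
                // TryWrite succeeds on an unbounded channel that is not completed.
                channel.Writer.TryWrite($"{p},{i}");
            }
        })).ToArray();

        await Task.WhenAll(producerTasks);
        channel.Writer.Complete(); // no more items; lets the consumer loop end
        return await consumer;
    }

    public static async Task Main()
    {
        var lines = await RunAsync(producers: 2, itemsPerProducer: 10);
        Console.WriteLine($"Consumed {lines.Count} lines");
    }
}
```

Completing the writer replaces the cancellation token as the stop signal, and the reader drains everything that was written before it finishes.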

You did not mention how much faster you did get with the concurrent CSV writer. I would suspect none or not much because currently your code is allocation and hence GC bound. As long as you allocate so many objects there is little point in adding more threads. Things would get better if you use concurrent GC which is a must if you are allocating too much data on many threads.

It's not a secret how much faster my code got. Before I moved to processing top-level branches in parallel, the whole export of one document repository took around 6 hours. After going parallel the time decreased to 1.5 hours, so it's 4 times faster than before.

Did you consider using BlockingCollection? Or does that have the same SpinWait issue because it uses the ConcurrentQueue by default? At least it should allow you to remove the while(true) loop I think.

BlockingCollection is another option to consider. It wraps an existing collection and implements blocking on top of it. I need the while loop because, depending on the situation, I may need to calculate a more accurate waiting time.
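As a rough sketch of the BlockingCollection variant, assuming the fixed waiting time is not needed: the consumer blocks inside GetConsumingEnumerable while the collection is empty and stops after CompleteAdding is called. The BlockingCollectionSketch class and the plain "p,i" strings are invented for the illustration and stand in for the Product DTOs and the CsvHelper calls.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;

// Hypothetical sketch, for illustration only.
static class BlockingCollectionSketch
{
    public static List<string> Run(int producers, int itemsPerProducer)
    {
        // With no collection passed in, BlockingCollection<T> wraps a ConcurrentQueue<T>.
        using (var items = new BlockingCollection<string>())
        {
            var consumer = Task.Run(() =>
            {
                var lines = new List<string>();

                // Blocks while the collection is empty; ends after CompleteAdding
                // once all remaining items are consumed.
                foreach (var line in items.GetConsumingEnumerable())
                {
                    lines.Add(line); // a real exporter would write the CSV record here
                }

                return lines;
            });

            var producerTasks = Enumerable.Range(0, producers).Select(p => Task.Run(() =>
            {
                for (var i = 0; i < itemsPerProducer; i++)
                {
                    items.Add($"{p},{i}");
                }
            })).ToArray();

            Task.WaitAll(producerTasks);
            items.CompleteAdding(); // signals the consumer that no more items will arrive
            return consumer.Result;
        }
    }

    public static void Main()
    {
        var lines = Run(producers: 2, itemsPerProducer: 10);
        Console.WriteLine($"Consumed {lines.Count} lines");
    }
}
```

This removes the while (true) loop, at the cost of giving up direct control over how long the reader waits between checks.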