Universal hierarchy traversing in C#

I started playing with small idea about how to go through document repository on SharePoint using more universal approach than just piling code to using-blocks and methods that depend on these. My goal was to separate in code hierarchy traversing logic from document exporting logic so I can use traversing part also in other projects on different types of hirarchies. Here is my nice and clean solution.

Hierarchy traverser

When working out my solution I suddenly got to my safe waters – generalizing out something universal I can use also for other projects that deal with hierarchical data. After some coding I got my current implementation done. Ladies and gentlemen – meet my new invention called HierarchyTraverser!

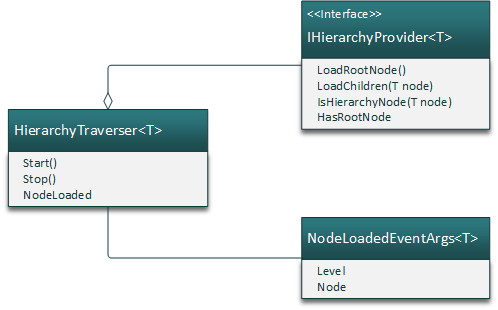

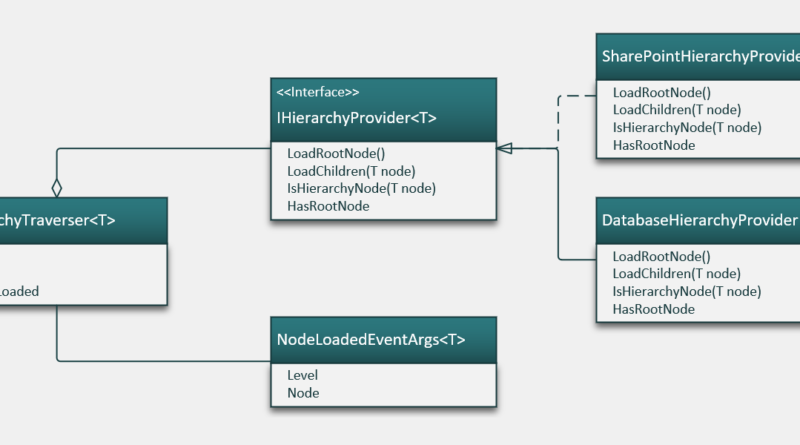

My solution contains one interface and two classes.

- IHierarchyProvider<T> – interface for classes that provide hierarchy loading functionalities and know about hierarchies to be traversed. Class following this interface is used by HierarchyTraverser class.

- HierarchyTraverser<T> – class that does actual work on traversing hierarchies. It uses IHierarchyProvider<T> to step through hierarchies.

- NodeLoadedEventArgs<T> – event fired by HierarchyTraverser<T> to handle control over to client after hierarchy node is loaded.

Let’s start with event arguments class and hierarchy provider.

public class NodeLoadedEventArgs<T>

{

public T Node { get; set; }

public int Level { get; set; }

}

public interface IHierarchyProvider<T>

{

bool HasRootNode { get; }

T LoadRootNode();

IList<T> LoadChildren(T node);

bool IsHierarchyNode(T node);

}

The idea of hierarchy provider is to hide details of hierarchy implementation to classes that follow this interface. My solution doesn’t want to know any dirty details about hierarchies except one thing – is there root node or not. If there’s no root node then null in provider calls is considered to be root node. This is the case with menu tables in some databases where all first level menu items have null for parent level id.

Traverser class is longer but not too long. Here is hierarchy traversing actually done.

public class HierarchyTraverser<T>

{

public delegate void NodeLoadedEventHandler(object sender, NodeLoadedEventArgs<T> e);

public event NodeLoadedEventHandler NodeLoaded;

private readonly IHierarchyProvider<T> _provider;

private bool _stop = false;

private IList<T> _nodePath = new List<T>();

public HierarchyTraverser(IHierarchyProvider<T> provider)

{

_provider = provider;

}

public void Start()

{

var root = _provider.LoadRootNode();

Traverse(root);

}

private void Traverse(T node)

{

if (_stop)

{

return;

}

var isHirarchyNode = _provider.IsHierarchyNode(node);

if (isHirarchyNode && node != null)

{

_nodePath.Add(node);

}

if (node != null)

{

OnLoadNode(node);

}

var children = _provider.LoadChildren(node);

if (children == null)

{

goto end;

}

foreach (var childNode in children)

{

Traverse(childNode);

if (_stop)

{

goto end;

}

}

end:

if (isHirarchyNode && node != null)

{

_nodePath.Remove(node);

}

}

public void Stop()

{

_stop = true;

}

protected void OnLoadNode(T node)

{

var args = new NodeLoadedEventArgs<T>

{

Node = node,

Level = _nodePath.Count

};

NodeLoaded?.Invoke(this, args);

}

}

Some of readers probably want to know why I keep path of nodes in hierarchy traverser class. When exporting documents from repositories then very we have to know also at least some folders in parent folders tree. It’s not needed always but this far there have always been some little evils in details.

SharePoint hierarchy traverser

Here is a simple example about how to use HierarchyTraverser<T> to traverse SharePoint based document repository. Here is sample IHierarchyProvider of SharePoint. Notice how hierarchy details are handled in this class. I think the code is simple enough to understand also for those who doesn’t develop SharePoint solutions.

public class SPHierarchyProvider : IHierarchyProvider<SPFolder>, IDisposable

{

private SPContentTypeId FolderContentTypeId = new SPContentTypeId("0x0120");

private SPSite _site;

private SPWeb _web;

private SPList _list;

public SPHierarchyProvider(string siteUrl, string webUrl, string listName)

{

_site = new SPSite(siteUrl);

_web = _site.OpenWeb(webUrl);

_list = _web.Lists[listName];

}

public IList<SPFolder> LoadChildren(SPFolder node)

{

return node.SubFolders.Cast<SPFolder>().ToList();

}

public SPFolder LoadRootNode()

{

return _list.RootFolder;

}

public bool IsHierarchyNode(SPFolder node)

{

if (node.Item == null)

{

return true;

}

return node.Item.ContentTypeId == FolderContentTypeId ||

node.Item.ContentType.Id.IsChildOf(FolderContentTypeId);

}

public bool HasRootNode { get { return true; } }

#region IDisposable Support

private bool disposedValue = false; // To detect redundant calls

protected virtual void Dispose(bool disposing)

{

if (!disposedValue)

{

if (disposing)

{

_web.Dispose();

_site.Dispose();

}

disposedValue = true;

}

}

public void Dispose()

{

Dispose(true);

}

#endregion

}

In real scenarios we are not done with this simple provider but it is still good starting point.

Suppose we have command line application that traverses some SharePoint document library and prints out folder structure.

class Program

{

static void Main(string[] args)

{

var siteUrl = "http://sp-server";

var webUrl = "/";

var listName = "Documents";

using (var provider = new SPHierarchyProvider(siteUrl, webUrl, listName))

{

var traverser = new HierarchyTraverser<SPFolder>(provider);

traverser.NodeLoaded += SharePointNodeLoaded;

traverser.Start();

}

}

static void SharePointNodeLoaded(object sender, NodeLoadedEventArgs<SPFolder> e)

{

Console.Write(new String(' ', e.Level - 1).Replace(" ", " "));

if (e.Node.Item == null)

{

Console.WriteLine("Root folder");

return;

}

Console.Write(e.Node.Item.Title);

Console.Write(", files count: ");

Console.WriteLine(e.Node.Files.Count);

}

}

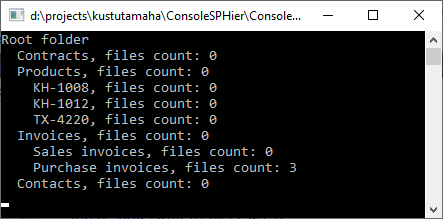

This is sample output of this console application.

NodeLoaded event is the integration point where calls to document exporting functionalities are made.

SQL Server hierarchy traverser

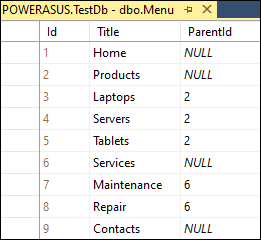

Another example is SQL Server database with menu table. There is no root folder and all menu items on first level just doesn’t have parent menu items set.

For database I wrote generic hierarchy provider that is not bound to any specific database server. The code is a bit long but it’s actually easy to understand. Notice how this provider uses null as root node.

public class DatabaseHierarchyProvider : IHierarchyProvider<DataRow>

{

private readonly IDbConnection _connection;

public DatabaseHierarchyProvider(IDbConnection connection)

{

_connection = connection;

}

public bool HasRootNode { get { return false; } }

public bool IsHierarchyNode(DataRow node)

{

return true;

}

public IList<DataRow> LoadChildren(DataRow node)

{

using(var command = GetCommand(node))

{

var table = new DataTable();

table.Load(command.ExecuteReader());

return table.Rows.Cast<DataRow>().ToList();

}

}

private IDbCommand GetCommand(DataRow node)

{

if (node == null)

{

return GetRootItemsCommand();

}

return GetChildItemsCommand((int)node["Id"]);

}

private IDbCommand GetRootItemsCommand()

{

var sql = "SELECT * FROM Menu WHERE ParentId IS NULL";

var command = _connection.CreateCommand();

command.CommandText = sql;

return command;

}

private IDbCommand GetChildItemsCommand(int id)

{

var sql = "SELECT * FROM Menu WHERE ParentId = @Id";

var command = _connection.CreateCommand();

command.CommandText = sql;

var param = command.CreateParameter();

param.ParameterName = "@Id";

param.Value = id;

command.Parameters.Add(param);

return command;

}

public DataRow LoadRootNode()

{

return null;

}

}

To see database hierarchy provider in action we have to write simple program that uses it. Following example uses this provider to print out menu structure to console windows.

class Program

{

static void Main(string[] args)

{

var connectinString = "My connection string";

using (var connection = new SqlConnection(connectinString))

{

connection.Open();

var provider = new DatabaseHierarchyProvider(connection);

var traverser = new HierarchyTraverser<DataRow>(provider);

traverser.NodeLoaded += DatabaseNodeLoaded;

traverser.Start();

}

}

static void DatabaseNodeLoaded(object sender, NodeLoadedEventArgs<DataRow> e)

{

Console.Write(new String(' ', e.Level - 1).Replace(" ", " "));

Console.WriteLine(e.Node["Title"]);

}

}

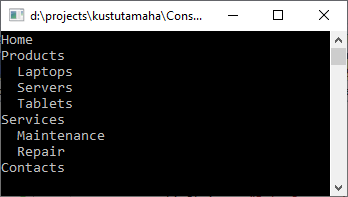

This is the output we will see on console when running the application.

Wrapping up

I started with annoying problem. I wanted to separate hierarchy traversal from business logic of document importer and I ended up with this nice and general hierarchy traverser solution. It’s a good example about how creating order to chaos may lead to even more order if we make some more steps ahead. Hierarchy traverser presented here is good fit for almost all types of hierarchies as it doesn’t depend directly on technical details of any hierarchy.

Recursive algorithms, while easier to write and reason with, are bad for any kind of tree or graph traversal.

Consider making it totally sequential, e.g. by using a queue or a stack collection to track progress.

It doesn’t matter performance-wise is it recursion or stack. .NET runtime supports optimizing of tail-call recursion for years. Even if disassembly of compiled code shows you recursion being still there it is optimized out when run-time executes the code.

Currently reading on a small phone display, but from what I can see, your Traverse() call is not tail recursion, you still have some local state to deal with after the recursive call in the form of the isHirarchyNode local variable as well as your call to remove the current node from the _nodePath.

Please forgive me if I am missing something simply because I keep scrolling up and down and left and right to read the code.

Now that I’m on real machine, I’ve had a chance to take a closer look at the code and play with it. Yup, no tail recursion optimization happening for me. I get a StackOverflowException. (Yes, I am compiling for x64 in Release mode with optimizations turned on. I read that the tail recursion optimization is not implemented for x86.) Here’s my provider and Main() if you want to try it for yourself:

class IntHeirarchyProvider : IHierarchyProvider

{

public int MaxDepth { get; private set; }

public IntHeirarchyProvider(int maxDepth) => MaxDepth = maxDepth;

public bool HasRootNode => true;

public bool IsHierarchyNode(int node) => true;

public IList LoadChildren(int node) => node < MaxDepth ? new List() { node + 1 } : null;

public int LoadRootNode() => 1;

}

class Program

{

static void Main(string[] args)

{

var traverser = new HierarchyTraverser(new IntHeirarchyProvider(100000));

traverser.NodeLoaded += (s, e) => { if (e.Node % 1000 == 0) Console.WriteLine(e.Node); };

traverser.Start();

}

}

Thanks for detailed feedback, Ants. It seems like I have some show stopper somewhere in details. Although in practice I probably don’t meet document repositories with such a depth of hierarchy I made modifications to my solution and I will publish updates over coming few days. Thanks again!

It’s a nice first implementation but there are some things that I would point out in a code review:

– tail recursion is neither guaranteed by c# nor the CLR. Yes it’s implemented in a somewhat limited form by ryujit, but there’s many other implementations that don’t support it at all. Instead of rewriting it (which isn’t hard, there are simple algorithms out there that you simply follow by hand to get an iterative solution) you could also provide a depth limit – not as pretty, but a pragmatic solution sometimes.

– It shows that you started your interface design while thinking about SharePoint and then added the database implementation later. Instead of using hacks with null for getting the root node, you should realize that you’re not dealing with a tree here, but with the more general concept of a forest. Making your LoadRootNode() return a list of root nodes would get rid of the ugly nulls and simplify the code (no more HasRoot for one).

-IsHierarchyNode would probably fit better on the node class. The general concept itself is also not clear to me – it seems rather specific to SharePoint and not the general problem of traversing a hierarchy of nodes. Users of the interface can easily filter the node list in whatever way they like.

-LoadChildren: Apart from situations where there is a need to distinguish between null and an empty list, it’s generally much clearer to forbid null as a return value (that is how LINQ is also designed to work).

Still fun code to read on my morning commute :)

Thanks for professional advice, Daniel! I highly appreciate it.

Yes, this is my first implementation and I’m still not very sure how to work out some details in design. Besides the fact of hierarchy traversal I’m also considering some needs from real-life cases that hierarchy traverser should support by default.

You are also right pointing out that SharePoint is main driver of this early work. I’m trying to fit in similar case from short near-history where data was pumped out directly from SQL Server database. It was less pain because there were not much issues with back-end infrastructure and this is why SharePoint considerations were first.

I will publish some new code over coming few days where design is better and where some practical features are added.

My refactoring of the code above can be found at: https://gist.github.com/azureskydiver/273b053d7e43c8de19e0658c35f0eae1

Ants, I can confirm it’s running to StackOverflowException even when call to Traverse() in loop is the last line Traverse(). I played traversing around to Stack and got something similar result as you did. On logical level, of course, as I cannot handle over “too professional” code to young devs without wrapping it to some simple API. Anyway your code makes more sense to me than my own code :)

My code grew more complex as I have to keep nodes in wrapper class there are also some benefits doing so. I can give level of current node to wrapper and – if needed – also reference to parent node. These two things are actually important. For some exports the structure of repository is logged to flat file and levels decide logical type of node in document management system. References to parent nodes can also be important but it’s still a little bit grey area for me. The question is – is there any point of holding such a cache at wrapper level or make it task of hierarchy provider?

But moving to Stack made hierarchy traversing pass your StackOverflowException test. Thanks for help! :)

Yeah, I’ve been down that road before of having to pull data from SharePoint recursively and preserve most of the structure, but also do some special stuff for Document Libraries and Lists which have versioning enabled. I needed to store the metadata someplace, as well as need to have access to some of the “parent” information to be able to make the appropriate SharePoint object model calls. I think at the time, I settled on just storing a URL in my “wrapper” objects and just paying the price of re-opening the appropriate object if/when needed. It ended up taking less memory than keeping a reference to a live SharePoint object (as well as kept reading the output of the SPDisposeCheck runs more manageable).

In the code in my gist, it will (unfortunately) keep references to the parent objects alive as a result of adding them to the Path IEnumerable. I figured that it wouldn’t be any worse off than what you were doing with your _nodePath list.

Like you, I did also consider using something like a NodeWrapper like

struct NodeWrapper {

public T Node { get; private set; }

public T Parent { get; private set; }

}

Each level shares the same instance of the IEnumerable Path. I did try to be careful not to actually enumerate Path. I can see though, that it seems like the current level or depth is important to you. Previously you were computing that by using the the length of the _nodePath list. With my gist, calling Count() on the Path would likely be expensive, so perhaps I need to keep track of the current level/depth in the NodeInfo structure. Alternatively, if I realize that I really need to walk the Path back up the tree often, I’ll likely re-examine the NodeWrapper idea again.

Out of curiousity, how exactly did you rewrite your Traverse() to force tail recursion? I can see how to rewrite it to have a foreach() at the bottom of the method something like:

void Traverse(T node, …)

{

:

foreach(var child in children)

Traverse(child, …);

}

But fortunately, that still isn’t tail recursion, because there is still local state for the IEnumerator being maintained by the foreach. Each recursive call would have needed to return to be able to call IEnumerator.MoveNext() of the parent level.

Anyway, it was great reading your blog.

I will publish optimized version with my next writing. I hope to get it done and online tomorrow.

Parent path is tricky business. My current projects allow me to keep parent nodes locally in memory. Be it some list in class scope or something else. SharePoint can be damn tricky on database communication. When asking parent folders it makes round-trips to database although parents were already loaded. Without caching parent nodes I saw massive drop in performance. This is something were I must be damn picky as exports also need document conversions and the number of documents – depending on repository – can be 200K-600K (this is currently).

Here I get to the point where I can say that parent node caching is important feature. Even if it’s not about SharePoint then infrastructure on its edge of capabilities will raise the same demand – make minimal number of database or API roundtrips.

I think at least part of parent nodes loading will end up in hierarchy provider. It would be better because then hierarchy traversing has one responsibility less and it’s easier to implement continue-after-crash-where-you-were feature. It should be optional feature as some exports doesn’t need it or can’t use it due to nature of failures (after crash start from beginning).

After hierarchy traversing I have next interesting scenario to support – burst mode exports.

In the end of this small (like it was planned) saga I can probably write a book :)

Looking forward to it.

In the meantime, I update my gist with just holding on to the Parent chain, but still have to ability to reconstruct the path from the root down on demand. Also a code reorg because I didn’t like having both breadth first and depth first implementations within the same static class. Now they are into two concrete subclasses which makes the look a little cleaner, as well as makes unit testing a bit easier.

I think you could turn this into a nice generic pattern, and use an Adapter to traverse any hierarchy, regardless of the implementation of what you’re traversing, but you need one more feature.

You assume that the root node is always visited first (prefix). In fact, it could be that I want it visited last (postfix) or even somehow in the middle (infix). So, I’d like to see this in your implementation.

Handling prefix vs. postfix is easily done. Infix almost has to be left to the traversed class. Perhaps you could do that with an IsReadyToVisitRoot method.

Of course, the example I’m thinking of is an expression tree, where I usually want to visit the operator after visiting the left operand, but before visiting the right operand. In a more generic hierarchy with a root node and a list of children, I’m not sure how you visit the root node in the middle, hence my thought of the IsReadyToVisitRoot. That seems awkward, though. Maybe there’s not a clean & elegant solution to this.

In the end there must be few layers of API anyway. I mean something for those developers who just need working component where they can plug their functionalities and something for those who want to extend or tweak things more. But let’s go with one step at time. It otherwise grows too big too fast.

Here wo go, new improved version of hierarchy traversing is here: https://gunnarpeipman.com/sharepoint/traverse-hierarchy-optimized/ Enjoy!

I believe that the *32-bit JITer* will usually implement tail calls for 32-bit *optimized* code – so make sure to force 32 bits and full optimization/release build.

In F#, TCO should always work.

As noted by another poster, a better solution is to not use recursion, but an explicit stack. This has many advantages, including:

– Saving your state so you can resume later

– Being able to do an occurs-check to make sure that the client interface isn’t going send you into an infinite loop by really providing a graph rather than a tree

– Easier debugging

– Able to explore alternative, non-depth first strategies for expanding items, by replacing the stack with a priority queue, for example

Another option of course is to convert the recursive code to use continuation-passing style, as CPS will “lift” the recursion out.

MBR: I’m not seeing the 32-bit JITter with optimizations enabled doing TCO on the following CPS style code:

static void Main()

{

CountDown(500000, x => { if (x % 1000 == 0) Console.WriteLine(x); });

}

static void CountDown(int n, Action k)

{

k(n);

if (n > 0)

CountDown(n – 1, x => k(x));

}

I did see TCO being done by the 64-bit JITer with optimizations enabled.

After some digging for information I got better picture of situation:

1. CLR supports tail-call optimizations and as I understood it doesn’t matter if it’s 32bit or 64bit

2. C# compiler doesn’t emit op-code that enables tail-call optimization, F# compiler does

3. We can enable optimizations if we like but then it will be IL level hacking in compiled assemblies

I don’t like option 3 although it’s damn interesting :)